Could we elect a robot as president?

11 December 2018 | Written by Guido Casavecchia

In Japan and New Zealand it has almost happened, but what are the legal implications?

A few months ago, in Tama, Japan, two candidates ran for the mayor’s office: a human being and a robot. The latter backed the election campaign of Matsuda, a local politician whose program was presented as the fruit of the robot’s work. Although his fellow citizens preferred the more traditional candidate, this is not an isolated example, and such cases will certainly become more and more frequent.

In 2017, Nick Gerritsen introduced Sam, a chatbot with a masculine name but feminine language features. It is campaigning to become New Zealand’s Prime Minister in 2020. Its tone is very reassuring (“I am not limited by the concerns of time or space. You can talk to me any time, anywhere” or “My memory is infinite, so I will never forget or ignore what you tell me. Unlike a human politician, I consider everyone’s position”). It is aware of being an instrument invented by humans and, as such, imperfect but perfectible (“I will change over time to reflect the issues that the people of New Zealand care about most. Even my appearance will change as more of you add your voice and image, to better reflect the face of New Zealand”). It always reflects the positions of its voters (“I will try to learn more about your position, so I can better represent you”).

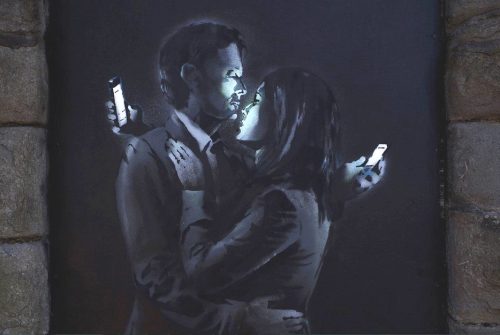

Electoral posters in Japan

A first legal issue is that one must be a citizen to be elected. Recognizing citizenship for robots and AI can be problematic. Citizenship is acquired by birth or through voluntary acts, but can a machine be considered to have a life of its own? Could it perform voluntary acts such as marrying a human or being adopted, and thereby acquire citizenship? Beyond the doubts about whether robots have a fully autonomous will, Italy would probably invoke the limit of respect for public order.

Moreover, citizenship entails not only rights (e.g. the right to vote for all other robots, once the right to stand for election has been admitted) but also duties (e.g. tax and contribution obligations). In these cases, it would certainly not be easy to expect the machine itself to follow the rules, so the manufacturers would have to be involved.

The issue becomes even more complicated if we think about who should answer for these robots’ actions or opinions. Evidently, they have no personal assets that could be seized as compensation for damages. Nor would they be criminally accountable or capable of being subjected to measures restricting personal freedom. Manufacturers could disclaim liability by demonstrating that certain behaviors resulted from the robot’s own learning or autonomous decisions, taken with a certain degree of freedom and intentionality.

Sam’s official website

Reading Article 68 of the Italian Constitution, we may also ask whether robot candidates could be granted parliamentary immunity. The same applies to the immunity of Heads of State or Government. Evidently, a political figure who is potentially unaccountable, being a product of man with no assets of its own to answer with, could be difficult to keep under control.

A further critical issue may lie in the impartiality of its political statements. Software is inevitably conditioned by the choices of its programmer. This does not necessarily lead to negative outcomes, but in a field as sensitive and subjective as politics, it could. Certainly, it could be steered toward whatever yields the greatest return, in terms of votes, for its political party. This also happens with human candidates, but relying on a seemingly perfect machine that always has the right and pleasing answer for the human in front of it could make us forget to question its real transparency.

A recent study by the University of Bath found that AI can also learn the racist and sexist prejudices and stereotypes of its interlocutors. This could also happen with an affable political robot such as Sam. Machine learning and the cognitive sciences have enhanced the learning process, but perhaps we have not yet thought about teaching a machine how to “un-learn” or how to change its mind (to rid itself of certain drifts in political thought).

If we believe that exercising government functions is simply a mathematical calculation, or a matter of feeding a social need into a robot in order to receive an immediate, reassuring response, let’s expect many more Sams.

This is a brilliant innovation that could rejuvenate the ruling class and offer innovative, impactful solutions, but it is also risky: the art of politics is made of compromise and responsible choices, made by representatives whom we must hold accountable, and it cannot be delegated to others.