Facebook is using AI to solve some of its problems: here’s how

8 November 2018 | Written by Filippo Scorza

Facebook’s defenses against fake news and inappropriate content have fallen short in the past: now, artificial intelligence could help the tech giant protect its users.

When we talk about artificial intelligence and machine learning, these concepts are often perceived as belonging to a distant future: perhaps we are not aware of what predictive and decision-making algorithms are already doing because their work is not tangible.

We read content on the web, watch television series and attend talks and presentations, but perhaps we should stop and reflect on what these technologies do for us every day.

A machine learning algorithm, as we all know, needs large amounts of data to be able to “learn” and extract useful information about the context in which it operates; at the same time, we know that platforms such as Facebook, Twitter and Google certainly have plenty of data to draw on.

Mark Zuckerberg, under scrutiny for some time now, has had to cope with waves of trolls, fake accounts, bots and inappropriate content, along with issues such as phishing and computer security.

Hunting for bots

Cybercriminals employ bots that act like swarms when it comes to creating Facebook accounts: they use fake IP addresses, speed up and slow down their typing to match the pace of a human being, and add each other as friends, just as real users do.

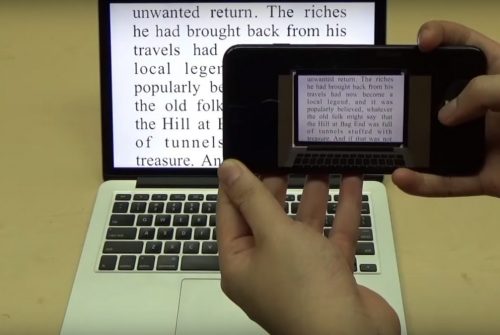

But they have not yet figured out how to simulate the movement of our hand when we hold the smartphone!

This is precisely what the AI of an Israeli startup, Unbotify, focuses on.

Unbotify works by analyzing behavioral data from devices, such as how the phone moves while an account is being created, as recorded by the gyroscope.

Its algorithm recognizes these patterns because it has been trained on thousands of users who have typed, “tapped” and swiped on their devices hundreds of times.

Bots can falsify IP addresses, but they cannot simulate how a person physically interacts with a device.
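To make the idea concrete, here is a minimal sketch in Python of how motion data might separate a hand-held phone from an automated session. It is not Unbotify’s actual method, which is not public: the function names, the threshold and the sample readings are all assumptions made up for illustration. A real system would train a model on many signals rather than rely on one fixed threshold.

```python
from statistics import pstdev

# Hypothetical threshold (rad/s): below it, the device is treated as motionless.
STILLNESS_THRESHOLD = 0.005


def motion_jitter(gyro_samples):
    """Return the mean per-axis standard deviation of (x, y, z) gyroscope readings."""
    axes = list(zip(*gyro_samples))  # regroup the samples into x, y and z series
    return sum(pstdev(axis) for axis in axes) / len(axes)


def looks_automated(gyro_samples, threshold=STILLNESS_THRESHOLD):
    """Flag a session in which the device barely moved while the form was filled in."""
    return motion_jitter(gyro_samples) < threshold


# Made-up data: a hand-held phone trembles slightly, an emulator reports no motion.
human_session = [
    (0.02, -0.01, 0.03),
    (-0.04, 0.05, -0.02),
    (0.03, -0.03, 0.01),
    (-0.01, 0.02, -0.04),
]
bot_session = [(0.0, 0.0, 0.0)] * 4

print(looks_automated(human_session))
print(looks_automated(bot_session))
```

With these invented readings, only the motionless session is flagged.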

Meanwhile, Facebook announced in May that it had removed 583 million fake accounts in the first three months of 2018: in practice, more than half a billion fake users.

But that is not all: do you remember memes?

Well, in March, the social network said it was expanding its fact-checking program to include images and videos, after a new form of propaganda began using memes and manipulated images and videos, rather than fake text, to influence users’ thinking.

These tools use AdVerif.ai’s AI, which can find images that are considered discriminatory or that violate Facebook’s terms and conditions. It can then run a reverse search on the image to see in which other contexts it was published and whether it was altered to show something different.

In practice, it can tell us whether an image has been manipulated.
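How might software notice that two copies of a picture differ? One common family of techniques is perceptual hashing, sketched below in Python with a simple “average hash”. This is an illustration under our own assumptions, not a description of AdVerif.ai’s system: the function names and the tiny 4x4 grayscale thumbnails are invented for the example.

```python
def average_hash(pixels):
    """Return a bit list: 1 where a grayscale pixel is brighter than the image mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(hash_a, hash_b):
    """Count the positions at which two equal-length hashes differ."""
    if len(hash_a) != len(hash_b):
        raise ValueError("hashes must have the same length")
    return sum(a != b for a, b in zip(hash_a, hash_b))


# Made-up 4x4 grayscale thumbnails (0 = black, 255 = white).
original = [
    [200, 200, 50, 50],
    [200, 200, 50, 50],
    [50, 50, 200, 200],
    [50, 50, 200, 200],
]
# The same picture, uniformly a little brighter (as after re-saving).
brightened = [[min(255, value + 10) for value in row] for row in original]
# The same picture with the bottom-right area painted over.
edited = [row[:] for row in original]
edited[2][2] = edited[2][3] = edited[3][2] = edited[3][3] = 20

print(hamming_distance(average_hash(original), average_hash(brightened)))
print(hamming_distance(average_hash(original), average_hash(edited)))
```

A distance of zero suggests the same picture, while a distance that is small but not zero hints that a copy found through reverse search was locally altered. How large a distance should count as suspicious is a judgment call that a real system would have to tune.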

This image, published on the Facebook page of “Vets for Trump” on September 28, 2017, had been digitally altered but was presented as authentic.

The original was taken by Seattle Seahawks photographer Rod Mar and showed NFL player Michael Bennett performing the now-traditional “post-victory dance” in the Seahawks locker room.

The fake image instead shows him holding a burning American flag. Posted on the page mentioned above, it stirred up considerable controversy around the player and drew more than 10,000 shares, likes, comments and views.

The digital world we are creating, it seems, needs not only well-defined rules and limits but also human and/or artificial guardians that can keep fake news in check, along with the spread of offensive, violent and inappropriate content: will we need laws and harsher penalties for the web?

Or maybe the problem is no longer fake pictures, but the fact that people do not care whether they are real?